Analysis of ChatGPT's Answers to Software Engineering Questions

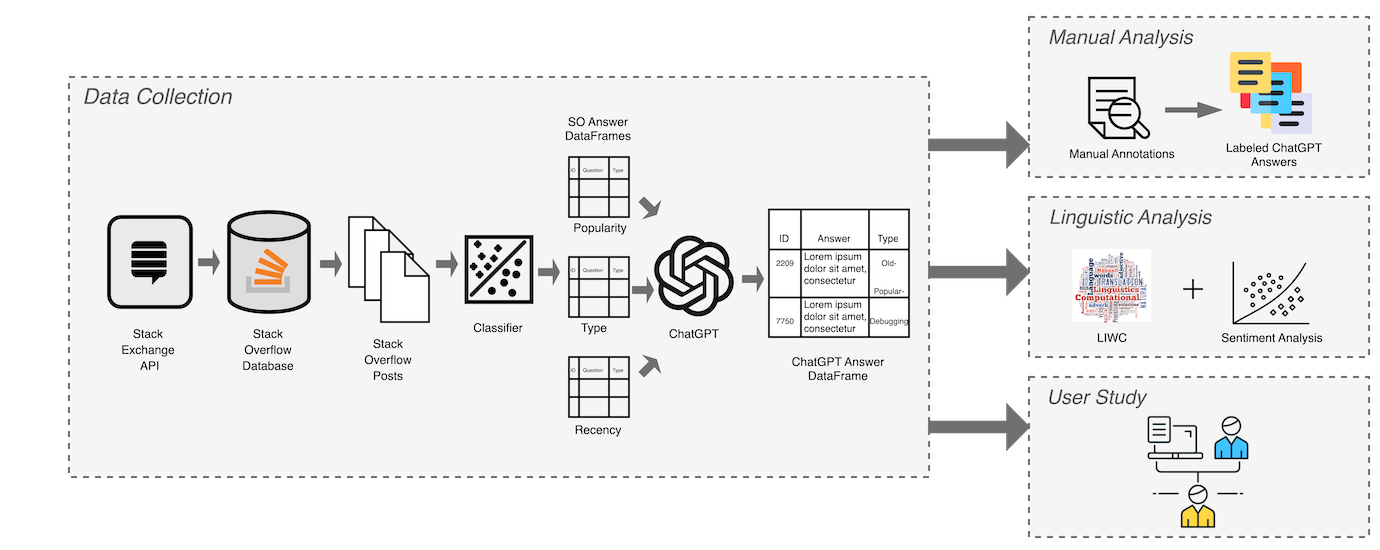

In this project, we empirically study the characteristics of ChatGPT’s answers to programming questions through an in-depth manual analysis, a large-scale linguistic analysis, and a user study.